A couple of important distinctions will help consultants like you create better client results and win more business by, ironically, lowering the quality bar on your work.

Last week’s article talked about perfectionism being a form of procrastination, and the importance of focusing on big wins while inuring yourself to little losses. (Last week’s article also referenced dinosaurs… as any good consulting blog should.)

The article was focused on marketing and infrastructure; nevertheless, a couple of readers pointed out that purposely allowing errors in work bound for clients is not good consulting.

Fair enough. Let’s take a look at the role of perfectionism in our consulting output.

Many, many consultants are perfectionists. No matter how good their work is, in their eyes the gaps loom larger than the gains. Their rating scale is skewed, which is why I’ve provided the handy translation tool below.

Aiming for good work (on the perfectionist’s scale) actually robs your client of value. The pursuit of “good” incurs needless delays, during which disgruntled employees and customers leave, problems start to fester and compound, and value-building opportunities are missed.

Still, we’re in uncomfortable territory for many consultants. Therefore, let me give you two practical and concrete quality distinctions that you can apply to your consulting work:

Statistical Significance vs. Clinical Importance

Imagine the laboratory test results for a new cancer drug, called Chocalix: compared to current treatments, Chocalix significantly reduces the number of cancer cells. However, there’s absolutely no change in the percentage of patients who die from cancer, the duration of life, the symptoms, the quality of life, or anything else that the patients would notice. Chocalix would cost ten times as much as current products. Should patients be advised to switch to Chocalix?

No.

As a consultant, you can make your work more robust and, with time, develop ideas, recommendations, and plans that are theoretically better than those you produce fairly quickly. However, is the theoretical advantage clinically important?

Unless taking the time to improve your work product will make a meaningful, noticeable, positive difference in the client’s behavior or results, it’s not worth the additional time and effort.

Correct vs. Complete

Correct means you’re pointing your client in the right direction. Delivering correct, error-free work is paramount. Errors—even small errors—instill doubt in your consulting, your advice and your value. That’s why I’m a stickler for creating error-free client deliverables.

Delivering complete work is an entirely different truffle. Complete means adding information. It’s the consulting equivalent of adding decimal places to increase precision.

You can always pile on more information, expand your work, and investigate further. But will it change the trajectory of your client’s results or your recommendation? If not, don’t do it.

Correct vs. complete requires judgment. How much information is enough?

When I’m conducting interviews in B2B markets, I never let the first three or four interviews guide my opinions. On the other hand, if I conduct ten interviews with people I’d expect to have different opinions and they all say the same thing, I don’t feel compelled to conduct ten more.

These two distinctions share a common question: Will improving my work product meaningfully change the client’s results, behaviors, plans or actions?

Hitting the target now is usually worth more than hitting the bull’s-eye later.

Even better is a collaborative process in which you provide (woefully) incomplete information to the client and give them the opportunity to critique, collaborate, and direct the next round of work. That process prioritizes correctness and real-world effectiveness over completeness and theory.

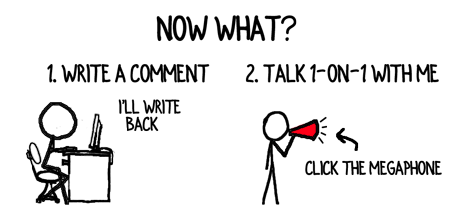

How else do you deliver high quality without falling into the perfectionist trap?

Text and images are © 2026 David A. Fields, all rights reserved.

David A. Fields Consulting Group

This is spot-on, David. I am constantly fighting myself not to waste time to make the deliverable perfect, in the “error-free” sense. Clients do notice small errors, and it can call into question your entire deliverable. If they see sloppiness in the output, they lose confidence in the underlying facts. “Smokin” on your imperfectionist’s scale is the minimum quality in my view.

HOWEVER, good enough, when it comes to the facts, is truly good enough. And you can accelerate finding that ‘good enough’ by starting with some well-baked hypotheses and testing them, as opposed to starting from a clean slate and learning the answers. There is much written about deductive vs. inductive consulting, and I’m not going to go there … but if you combine smart interview or information gathering techniques with well-baked hypotheses, you can often get to ‘good enough’ efficiently, and then focus on the nuances of what you learn to deliver extraordinary value to your client. Let me share a quick example.

My field is the media industry. Reading a lot and spending every day in the sector, I hypothesized that a common industry challenge (in this case, related to cross-device identity) was going to be the top challenge on the minds of the interviewees during the discovery phase of a particular client project. As you suggest, hearing it in the first four interviews wasn’t enough, but by the time I got to the eighth, I knew this was going to be the top response. We interviewed a little more than 25 executives, but instead of repeating the same questions, I took the liberty, starting with number eight, of focusing the interview on a set of nuanced details relative to the problem, and then shifted toward solutions by the time I was speaking with the fourteenth or fifteenth interviewee. By the time I reached number 25, I not only had sufficient evidence as to the magnitude and commonality of the problem, I also had two very practical options to address it, along with the buy-in of a full third of interviewees. This helped my client move from issue to action more quickly, and resulted in a year-long paid assignment for my consulting firm.

Many of us recognize the phrase, “Perfect is the Enemy of the Good.” I never skimp on error-free, but I agree with David that there is no such thing as perfectly complete, and that good works just fine for that case.

David, you’ve surfaced an important issue for consultants: how to work efficiently without playing into confirmation bias. Starting with a hypothesis and testing it can be a useful shortcut and, as I’m sure you know and practice, it’s as important to try to disprove it as it is to find evidence that supports the hypothesis. Thank you for providing the concrete example of achieving a good, correct, meaningful result that didn’t go overboard.

David–

I couldn’t agree more with your post today. I’m involved in a practice called Innovation Engineering, and there we say: I don’t know; I need help; I fail a lot. The translation is that none of us knows everything, that other people may be helpful in attacking the problem we’re working on, and that we will try (experiment with) concepts and fail (learn more) with each attempt. The trick, of course, is to “fail” in manageable pieces, with low risk.

All of this is difficult with a typical consulting mindset that says, I need to be the knower of all things!

An approach that I use is to develop a concept for a project, and then share it widely among the client and other stakeholders and ask for advice and ways to improve. I find that I get a ton of new information, often about things I wouldn’t have known to ask about without this. It turns out that people think I’m smarter and a better consultant when I ask their opinion, than when I simply share my opinion first!

Thankfully, we don’t have to be omniscient!

Kathy, this is perfect: “It turns out that people think I’m smarter and a better consultant when I ask their opinion, than when I simply share my opinion first!” That is true for delivering projects; it’s true for winning projects and it’s true for building relationships. The less we make consulting about us and the more we make it about others, the more success we’ll find. Thank you for sharing that brilliance.

Wow, that’s me, busted! And to say any more than that would not meaningfully change my behaviours, plans or actions.

Well, Emma, that was a perfectly concise, high-quality comment! (Funny, too.) Nice job refraining from adding more. I believe you’re well on your way to imperfection.